Overview Of The SPP Architecture

This section gives an overview of the SPP architecture. It describes the key hardware and software features that make it possible to support the main abstractions provided to an SPP slice/user:

- Slice

- Fastpath

- Meta-Interface

- Packet queue and scheduling

- Filter

Coupled with these abstractions are the following system features:

- Resource virtualization

- Traffic isolation

- High performance

- Multi-Protocol support

These features allow the SPP to support the concurrent operation of multiple high-speed virtual routers and allow users to add support for new protocols. For example, one PlanetLab user could be forwarding IPv4 traffic while a second could be forwarding I3 traffic. Meanwhile, a third user could be programming the SPP to support MPLS.

We begin with a very simple example of an IPv4 router to illustrate the SPP concepts. Then, we briefly review the SPP's main hardware components. Finally, we describe the architectural features in three parts. The first two parts emphasize the virtualization feature of the SPP while the third part emphasizes the extensibility of the SPP. Part I describes how packets travel through the SPP assuming that it has already been configured with a fastpath for an IPv4 router. Part II describes what happens when we create and configure the SPP abstractions (e.g., create a meta-interface and bind it to a queue) for the router in Part I. Part III sketches how the example would be different if the router handled a simple virtual circuit protocol along with IPv4.


IPv4 Example

We begin with a simple example of two slices/users (A and B) concurrently using the same SPP as an IPv4 router (R). As shown in the figure (right), A's traffic is between the endhosts H1 and H3, and B's traffic is between H2 and H4. Traffic will travel over multiple Internet hops from an endhost to router R. Also, H1 and H2 are in the same stub network, and H3 and H4 are in the same stub network. (Note: The IP addresses in the figure were chosen for notational convenience and do not refer to existing networks.) Furthermore, both slices want 100 Mb/s of bandwidth in and out of R. We have purposely elected to make the logical views of the two slices as similar as possible to show how the SPP substrate can host this virtualization.

From a logical point of view, each user of router R needs a configuration (right) which includes, at a minimum, one fastpath consisting of three meta-interfaces (m0-m2), four queues (q0-q3), and six filters (f0-f5). Meta-interface m0 goes to R itself; m1 to H1 and H2; and m2 to H3 and H4.


The configuration for both slices will reflect these properties of the example:

- Both slices are concurrently using the same SPP;

- They have identical service demands;

- But they are communicating between different pairs of hosts (and socket addresses); and

- The SPP provides fair sharing of its bandwidth.

The differences will be reflected in each slice's configuration of R. The configuration of the two slices will be identical except for the following:

- The UDP port numbers of their meta-interfaces will be different so that the SPP can segregate traffic from the two slices coming in the same physical interface.

- Some fields in the filters will reflect the different socket addresses used at the endhosts.

Slices A and B will have the logical views shown in the tables (right). Note the following:

- The total bandwidth of the meta-interfaces (202 Mb/s) cannot exceed the bandwidth of the fastpath (FP).

- There should be at least one queue bound to each meta-interface (MI).

- The highest-numbered queues are associated with meta-interface 0, which handles local delivery and exception traffic.

- The only difference between the two tables is the UDP port number of the MI sockets: 22000 for slice A and 33000 for slice B.
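The first two notes above are simple consistency rules, and a minimal sketch of checking them might look like the following. The class names, the per-MI bandwidth split (2 + 100 + 100 Mb/s) and the queue-to-MI binding are assumptions made for illustration; only the 202 Mb/s total, the four queues and the placement of the highest-numbered queues on m0 come from the text.

```python
from dataclasses import dataclass, field

@dataclass
class MetaInterface:
    mi_id: int
    bandwidth_mbps: int
    queue_ids: list = field(default_factory=list)

@dataclass
class Fastpath:
    bandwidth_mbps: int
    meta_interfaces: list

def check_fastpath(fp):
    """Return a list of problems; an empty list means both rules hold."""
    problems = []
    total = sum(mi.bandwidth_mbps for mi in fp.meta_interfaces)
    if total > fp.bandwidth_mbps:
        problems.append(
            f"MI bandwidth {total} Mb/s exceeds fastpath {fp.bandwidth_mbps} Mb/s")
    for mi in fp.meta_interfaces:
        if not mi.queue_ids:
            problems.append(f"m{mi.mi_id} has no queue bound to it")
    return problems

# Hypothetical values: the bandwidth split and queue binding are assumed.
slice_a = Fastpath(
    bandwidth_mbps=202,
    meta_interfaces=[
        MetaInterface(0, 2, [2, 3]),   # local delivery and exception traffic
        MetaInterface(1, 100, [1]),
        MetaInterface(2, 100, [0]),
    ],
)
print(check_fastpath(slice_a))
```

With these assumed values both rules hold, so the returned list is empty.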

| MIin \ MIout | m0 | m1 | m2 |
|---|---|---|---|
| m0 | | f0 | f1 |
| m1 | f2 | | f3 |
| m2 | f4 | f5 | |

At the least, each slice will have six filters because there will be two filters for each meta-interface; i.e., one for each possible meta-interface destination. For example, traffic from m1 can go to m0 or m2. Although the general structure of the filters will be the same for both slices, the details will be different reflecting the difference between the endhosts used by each slice.

For example, the filter labeled f3, which forwards from m1 to m2, forwards traffic destined for host H3 in slice A and for host H4 in slice B. Since H3 and H4 have different IP addresses, the f3 filters of the two slices will differ.
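The count of six filters follows from pairing each input meta-interface with every other meta-interface as a possible output. A small sketch of that enumeration is shown here; the numbering order is an assumption, chosen because it reproduces the one pairing the text states explicitly (f3 forwards from m1 to m2).

```python
from itertools import permutations

mis = ["m0", "m1", "m2"]

# One filter per ordered (input MI, output MI) pair with distinct endpoints.
filters = {f"f{i}": pair for i, pair in enumerate(permutations(mis, 2))}

for name, (mi_in, mi_out) in filters.items():
    print(f"{name}: {mi_in} -> {mi_out}")
```

With n meta-interfaces this gives n(n-1) filters, which is six for n = 3.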

So, how does the SPP make it appear that each slice has two dedicated 100 Mb/s paths through R even when traffic from both slices is coming in at the same time?

SPP Hardware Components

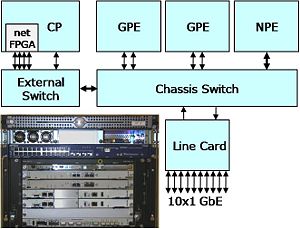

For a developer, the most important hardware components of an SPP are the Processing Engines (right). There are two types of Processing Engines (PEs): 1) a General-Purpose Processing Engine (GPE), and 2) a Network Processor Engine (NPE). A GPE is a conventional server blade running the standard PlanetLab operating system. A user can log into a GPE (using ssh) and can run processes that handle packets. An NPE includes two IXP 2850 network processors, each with 16 cores for processing packets and an XScale management processor. The NPE has a 10 GbE network connection and is capable of forwarding packets at 10 Gb/s.

All input and output passes through a Line Card (LC) which is an NPE that has been customized to route traffic between the external interfaces and the GPEs and NPEs. The LC has ten GbE interfaces, some of which will have public IP addresses while others will be used for direct connection to other SPP nodes.

The Control Processor (CP) configures application slices based on slice descriptions obtained from PlanetLab Central, a centralized database that is used to manage the global PlanetLab infrastructure. The CP also hosts a NetFPGA, allowing application developers to implement processing in configurable hardware as well as in software.

[...[ FIGURE SHOWING 3 PKT PATHS ]...]

When we describe how the SPP processes packets, you will see that:

- For most packets, the main job of the LC is to forward packets to the NPE with the correct fastpath (FP).

- In most cases, packets going to the GPE are from the NPE although they can also come from the LC.

- Packets going to the GPE from an NPE are associated with a FP (e.g., IPv4 ICMP packet).

- Packets going to the GPE from the LC are going to an endpoint configured by a user that is NOT part of a FP.

Keeping these points in mind should make it easier to understand the partitioning of activities among SPP components.

Part I: IPv4 Packet Forwarding

Outer (PlanetLab) Headers And Inner Headers

The point to remember is that PlanetLab is an IPv4 overlay network in which the overlay is provided through IPv4 UDP tunnels. That is, a user begins with a subset of the IPv4 address space consisting of the sockets (IP address and port number) that define the tunnels. When implementing an experimental protocol in PlanetLab, the experimental protocol header is placed in the UDP packet. The result is that there are multiple network address spaces: 1) the whole Internet's address space; 2) PlanetLab's overlay address space; and 3) the user's address space.

[...[ FIGURE SHOWING ENCAPSULATION ]...]

For now, focus on slice A's traffic, which is coming from socket (9.1.1.1, 11000) at H1 and going to socket (7.1.1.1, 5000) at H3. Conceptually, a transit packet coming to router R has the form (H, (H', D)) where H is the PlanetLab (or outer) IP+UDP headers, H' is the inner IP+UDP headers, and D is the UDP data. The destination socket in H (outer headers) will reflect the UDP tunnel associated with m1 (MI 1); i.e., (9.3.1.1, 22000). The source and destination sockets in H' (inner headers), by contrast, will reflect the source and destination sockets of the application; i.e., (9.1.1.1, 11000) and (7.1.1.1, 5000).

Similarly, if we examine slice B's packets, the destination socket in H (outer headers) will be (9.3.1.1, 33000); i.e., the same IP address used by slice A but a different port number. The source and destination sockets in H' (inner headers) will be (9.2.1.1, 12000) and (7.1.1.2, 6000) which reflect the different application sockets used by slice B.
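To make the (H, (H', D)) form concrete, the sketch below models a packet as an outer destination socket plus inner sockets and data, and selects the slice from the outer destination socket alone. The type and function names are hypothetical; the socket values are the ones used in the example.

```python
from typing import NamedTuple, Optional

class Socket(NamedTuple):
    addr: str
    port: int

class Packet(NamedTuple):
    outer_dst: Socket   # part of H: the tunnel endpoint (meta-interface socket)
    inner_src: Socket   # part of H': the application's source socket
    inner_dst: Socket   # part of H': the application's destination socket
    data: bytes         # D

# Same IP address for both slices; only the UDP port tells them apart.
MI_SOCKET_TO_SLICE = {
    Socket("9.3.1.1", 22000): "A",
    Socket("9.3.1.1", 33000): "B",
}

def slice_for(pkt: Packet) -> Optional[str]:
    """Pick the slice from the outer header only; the inner header is not consulted."""
    return MI_SOCKET_TO_SLICE.get(pkt.outer_dst)

pkt_a = Packet(Socket("9.3.1.1", 22000),
               Socket("9.1.1.1", 11000), Socket("7.1.1.1", 5000), b"payload")
pkt_b = Packet(Socket("9.3.1.1", 33000),
               Socket("9.2.1.1", 12000), Socket("7.1.1.2", 6000), b"payload")
print(slice_for(pkt_a), slice_for(pkt_b))
```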

If we extend this example so that packets transit through multiple PlanetLab nodes, the outer headers H will change as packets pass through each node while the inner headers H' will remain fixed during transit. This situation is analogous to an IP packet transiting multiple Ethernet networks: the IP packet is encapsulated in an Ethernet frame that changes as the packet moves through the networks, but the IP packet header remains fixed.

If slice B in the example were experimenting with an ATM-like protocol instead of IPv4, the inner header H' would have a format that looked like an ATM header (with a virtual circuit identifier) rather than IPv4+UDP headers.

In an SPP node, the outer and inner headers reflect two main concerns:

- The outer headers tell the SPP which virtual router should handle the packet.

- The inner and outer headers together tell the virtual router which interface should be used for forwarding.

It is this second point that distinguishes an SPP node from other types of PlanetLab nodes since other PlanetLab nodes only have one interface while an SPP node typically has multiple interfaces.

Forwarding A Packet

The main job of a router is to forward packets out the appropriate interfaces. A simple IPv4 router has a routing table with each entry containing a network address (the lookup key) and an interface (the lookup result). The router examines the destination address from an incoming packet, searches the routing table for the best matching key, and returns the corresponding interface to be used for forwarding.
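As an illustration of the lookup just described, here is a minimal sketch that assumes "best matching key" means longest-prefix match, which is the usual rule for IPv4. The prefixes and interface names are hypothetical and are not taken from the example network.

```python
import ipaddress

def lookup(routing_table, dst):
    """Return the interface of the longest prefix that contains dst, or None."""
    dst_ip = ipaddress.ip_address(dst)
    best = None
    for prefix, interface in routing_table:
        net = ipaddress.ip_network(prefix)
        if dst_ip in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, interface)
    return best[1] if best else None

# Hypothetical routing table: (network address, interface) entries.
table = [
    ("0.0.0.0/0", "if0"),
    ("7.1.0.0/16", "if2"),
    ("9.1.0.0/16", "if1"),
]
print(lookup(table, "7.1.1.1"))
```

A linear scan keeps the sketch short; a real router would use a trie or a TCAM for the same decision.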

Because the SPP houses multiple, virtual routers, an incoming packet is first directed to the appropriate virtual router component which then uses a forwarding table built from the user's filters to determine the appropriate queue (and therefore, meta-interface) to use. Furthermore, each virtual router has a fastpath and a slowpath which are handled by different hardware components (i.e., NPE and GPE respectively). For now, we focus on the fastpath.

[...[ FIGURE SHOWING PKT PATH ]...]

In our example, the SPP processes an incoming transit packet in the following way:

- LC(in): Determine the NPE from the outer headers and forward the packet to the NPE.

  - The destination socket in the outer header identifies the NPE.

- NPE: Look up the MI, create new outer headers, enqueue the packet for an MI, and forward the packet to the LC.

  - Before the NPE can do a lookup, it has to extract from the incoming packet all of the fields that are used during lookup.

  - The lookup key includes a slice (or virtual router) identifier.

- LC(out): Add the Ethernet header based on the outer headers and transmit the frame.

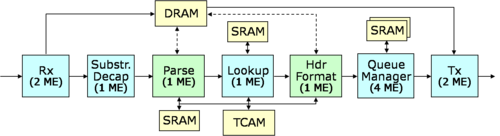

Now, consider the NPE (right) as it processes an incoming packet from the LC. The Rx block stores the packet in a DRAM buffer, creates a buffer descriptor in SRAM, and sends a meta-packet containing a buffer handle to the next block. A buffer handle is a convenient representation for both the buffer address and the buffer descriptor address.

The Decap block extracts some fields from the outer header and outputs some internal identifiers in preparation for the Parse block. For example, it determines the slice ID (sID), the incoming MI (rxMI), and the code option (copt). In our example, both slices will be using the IPv4 code option. But in general, the SPP supports multiple code options to handle different header formats.

The main part of forwarding is done by the Parse, Lookup and Header Format blocks. The Parse block forms the lookup key to be used in the Lookup block. The lookup key is primarily constructed from fields in the inner header. The Lookup block uses the TCAM to determine the disposition of the packet: the search result indicates the scheduling queue and the destination socket of the outgoing tunnel. The TCAM contains the filters for all slices. Each filter contains the fields defined by the user plus additional fields involving internal variables. The next section provides an example of the filter format. Then, the Header Format block creates the new outer header for the outgoing packet and sends a meta-packet to the Queue Manager.

Meanwhile, the Queue Manager is scheduling packets according to a Deficit Round Robin (DRR) algorithm to provide bandwidth sharing. When a packet should be transmitted, the Queue Manager sends a meta-packet to the Tx block. Finally, the Tx block sends the packet with its new outer header to the LC.
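For readers unfamiliar with Deficit Round Robin, the following is a minimal sketch of the textbook algorithm, not the Queue Manager's actual implementation. The class name, the per-queue quantum values and the use of packet lengths in bytes are assumptions.

```python
from collections import deque

class DRRScheduler:
    def __init__(self, quanta):
        # quanta: queue id -> bytes of credit added each round (assumed values)
        self.quanta = dict(quanta)
        self.queues = {qid: deque() for qid in quanta}
        self.deficit = {qid: 0 for qid in quanta}

    def enqueue(self, qid, packet_len):
        self.queues[qid].append(packet_len)

    def run_round(self):
        """Visit each backlogged queue once; return [(qid, packet_len), ...] in send order."""
        sent = []
        for qid, queue in self.queues.items():
            if not queue:
                continue
            self.deficit[qid] += self.quanta[qid]
            # Send packets while the head packet fits in the accumulated credit.
            while queue and queue[0] <= self.deficit[qid]:
                length = queue.popleft()
                self.deficit[qid] -= length
                sent.append((qid, length))
            if not queue:
                self.deficit[qid] = 0  # an emptied queue does not bank credit
        return sent

sched = DRRScheduler({0: 1500, 1: 1500})
for _ in range(3):
    sched.enqueue(0, 1000)
for _ in range(2):
    sched.enqueue(1, 1500)
print(sched.run_round())
print(sched.run_round())
```

Equal quanta give the two queues equal byte shares over time even though their packet sizes differ, which is the bandwidth-sharing property the paragraph relies on.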

IPv4 TCAM Filter Format

To make the previous section more concrete, we describe the IPv4 TCAM filter format and present a small example that illustrates how multiple virtual routers can be supported. The section Part III: Multiple Protocol Support Example gives an example that illustrates how multiple protocols are supported.

[...[ FIGURE OF ABSTRACT TCAM ]...]

The TCAM is a parallel, hardware search engine with a forwarding table. It contains the filters for all slices in key-mask-result format. Each entry is based on the fields defined by users' filters plus internal fields such as the slice ID. The slice ID allows the Lookup block to restrict lookups to only those keys that are relevant to the packet being examined.

[...[ FIGURE OF TCAM IPv4 LOOKUP KEY ]...]

To the Lookup block, the TCAM table contains entries which consist of three generic bit strings: a 144-bit lookup key, a 144-bit mask and a 64-bit result. However, each slice places its own interpretation on top of these strings by selecting a code option. For example, the figure (right) shows the format of the key for the IPv4 code option. The IPv4 fields from a user's filter are shaded in blue, and the internal (substrate) fields are shaded in pink.

Note that although the fields shaded in pink are generated internally when the filter is installed, the if field is derived from the --key_rxmi (receiver meta-interface ID) field specified in a user's filter. For example, slice A might have the following for its f3 filter:

    ./fltr --cmd write_fltr --fpid 0 --fid 3 \
      --key_type 0 --key_rxmi 1 --key_daddr 7.1.1.1 --key_saddr 0 --key_sport 0 --key_dport 5000 --key_proto 0 \
      --mask_daddr 0xFFFFFFFF --mask_saddr 0 --mask_sport 0 --mask_dport 0xFFFF --mask_flags 0 \
      --qid 0 --sindx 3 --txdaddr 11.1.16.2 --txdport 20000

which contains the argument --key_rxmi 1 referring to its own MI 1. The SPP converts this local label for MI 1 into the if field in the key, which is an internal MI identifier that is globally unique. In effect, the if value is an alias for MI 1's socket (9.3.1.1, 22000). The RX port field is the UDP port number of the input MI (22000 in this example).

The vlan field is the slice ID and is also the VLAN tag used for the slice's messages that travel between the LC and the NPE. Finally, the T field indicates with a 0 that it is a normal filter and with a 1 that it is a substrate-only filter (described later).

The section Part III: Multiple Protocol Support Example shows the format for a simple virtual circuit code option.

Every entry also has a 144-bit mask with the same fields that are in the lookup key. The mask selects which bits of the key are compared during match evaluation. Suppose K and M are the key and mask portions of a TCAM entry and K' is the key derived from the packet headers by the Parse block. Then, a TCAM entry matches a packet if (K' & M) = (K & M).
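The match rule can be sketched in a few lines. The key layout used here (16-bit slice ID, 32-bit destination address, 16-bit destination port) is a toy format invented for illustration and is not the 144-bit IPv4 key; the slice ID values, the result strings and the first-match priority order are likewise assumptions.

```python
import ipaddress

def make_key(slice_id, daddr, dport):
    """Pack a toy 64-bit key: slice ID | destination address | destination port."""
    return (slice_id << 48) | (int(ipaddress.ip_address(daddr)) << 16) | dport

def tcam_match(key, mask, packet_key):
    # An entry matches when the packet key and entry key agree on every masked bit.
    return (packet_key & mask) == (key & mask)

def tcam_lookup(entries, packet_key):
    """Return the result of the first matching (key, mask, result) entry, or None."""
    for key, mask, result in entries:
        if tcam_match(key, mask, packet_key):
            return result
    return None

EXACT = (0xFFFF << 48) | (0xFFFFFFFF << 16) | 0xFFFF   # compare every field
SLICE_ONLY = 0xFFFF << 48                              # compare only the slice ID

entries = [
    (make_key(1, "7.1.1.1", 5000), EXACT, "A:f3"),
    (make_key(2, "7.1.1.2", 6000), EXACT, "B:f3"),
    (make_key(1, "0.0.0.0", 0), SLICE_ONLY, "A:default"),
]
print(tcam_lookup(entries, make_key(1, "7.1.1.1", 5000)))
```

Because the slice ID is part of every key and is never masked out, a packet from one slice cannot match another slice's entry, even when the remaining fields are identical.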

[...[ FIGURE OF TCAM IPv4 RESULT ]...]

The figure (right) shows the format of the result for the IPv4 code option.

XXXXX

Part II: Configuring the SPP

XXXXX

Part III: Multiple Protocol Support Example

XXXXX

In order to support a new protocol (i.e., code option), the Parse block must be updated to produce the correct lookup key for the Lookup block; and the Header Format block must be updated to understand the result from the Lookup block. The Lookup block requires no logic change since it uses a generic key-result format. Software running in a GPE on behalf of a slice can insert filters into an NPE using a generic interface that treats the filter as an unstructured bit string. Slice developers will typically provide more structured interfaces that are semantically meaningful to their higher level software, and implement those interfaces using the lower level generic interface provided by the SPP.
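The division of labor described above can be sketched as a registry of code options in which only the Parse and Header Format steps are protocol-specific. Everything in this sketch is hypothetical: the function names, the dictionary standing in for the TCAM, the header fields and the result layouts. Only slice A's queue and tunnel values are borrowed from the f3 filter example.

```python
# Hypothetical registry: code option name -> (parse, header_format).
CODE_OPTIONS = {}

def register(copt, parse, header_format):
    CODE_OPTIONS[copt] = (parse, header_format)

def lookup(table, key):
    # Generic Lookup step: the key and the result are opaque to it.
    return table.get(key)

def forward(copt, slice_id, inner_header, table):
    parse, header_format = CODE_OPTIONS[copt]
    key = (slice_id, parse(inner_header))   # Parse: protocol-specific
    result = lookup(table, key)             # Lookup: unchanged for every protocol
    if result is None:
        return None
    return header_format(result)            # Header Format: protocol-specific

register("ipv4",
         parse=lambda h: (h["daddr"], h["dport"]),
         header_format=lambda r: {"qid": r[0], "tx_socket": (r[1], r[2])})
register("vc",
         parse=lambda h: h["vci"],
         header_format=lambda r: {"qid": r[0], "tx_socket": (r[1], r[2]),
                                  "out_vci": r[3]})

table = {
    ("A", ("7.1.1.1", 5000)): (0, "11.1.16.2", 20000),
    ("B", 42): (0, "11.1.16.2", 20000, 77),   # assumed virtual circuit entry
}
print(forward("ipv4", "A", {"daddr": "7.1.1.1", "dport": 5000}, table))
print(forward("vc", "B", {"vci": 42}, table))
```

Adding the virtual circuit option required two new functions and a table entry, while `lookup` stayed untouched, which mirrors the claim that the Lookup block needs no logic change.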