Dev:Forest - an overlay network for distributed applications

This page describes the high-level architecture for the scalable network game system, focusing on the major system components, their interfaces and functionality. We envision several different implementations of the architecture, for different deployment contexts. These contexts include the Open Network Lab, the Supercharged PlanetLab Platform (SPP), VINI and Amazon EC2. Some of these implementations (perhaps all) will be incomplete, but we want to maintain a reasonable degree of consistency across the platforms to minimize redundant work.

System Overview

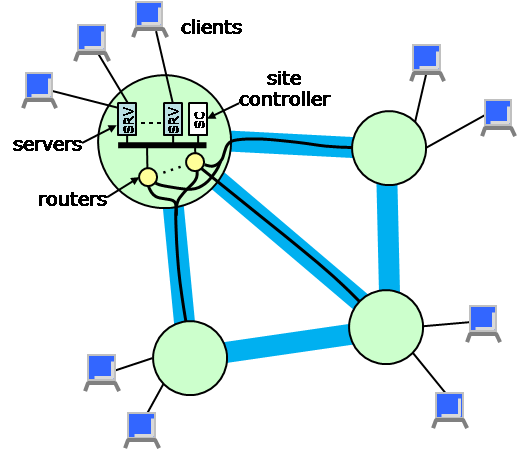

The network game system is distributed across multiple sites. Each site has a known geographic location and includes processing resources of various types. In particular, each site has one or more servers and routers. Servers connect to remote users or clients over the Internet and communicate with other servers through the routers. Sites are connected by metalinks, each of which has an associated provisioned bandwidth. Multiple routers at a site may share a metalink going to another site, but they must coordinate their data transmissions so as not to exceed its capacity. In addition, each site has a site controller, which manages the site and configures the other components as needed. Within a site, communication takes place through a switch component that is assumed to be nonblocking. These ideas are illustrated in the figure above.

In the planned SPP deployment, each site will correspond to a slice on an SPP node (including a fastpath for the routing function and a GPE portion that implements the site controller functions), plus a set of remote servers connected to the SPP node over statically configured tunnels.

In addition to the components shown in the diagram, there is a web site through which clients can register, learn about game sessions in progress and either join an existing session or start a new one. When the user requests to join a session, the system will select a server and return its IP address and a session id to the client, who will use these to establish a connection to the server and join the session.

Communication in the network occurs over multicast channels. Each channel is identified by a globally unique 32-bit value and has an associated set of components and links which form a multicast tree. Channels are used most commonly to distribute state update information for game sessions. In this case, packets are delivered to all servers that belong to the session. However, channels can also be used by the system to distribute control information of various sorts. Packets can be sent to specific components on a channel, or to sets of components (e.g. all site controllers). In general, the links in a channel can carry data in either direction, enabling many-to-many communication. The first 1024 channels are reserved for system control purposes and cannot be used for game sessions.

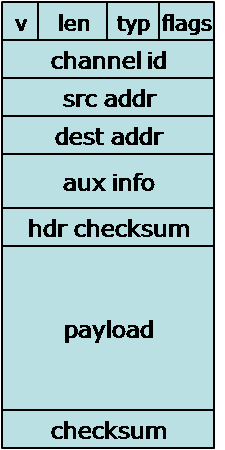

The system components communicate by sending packets of various types. The common packet format is shown in the figure above and the fields are described below. The protocols used by the various components define specific packet types and additional fields. These are described in the sections dealing with those protocols.

- Version (4 bits). This field defines the version of the protocol suite. The current version is 1.

- Length (12 bits). Specifies the number of bytes in the packet. Must be divisible by 4 and can be no larger than 1400.

- Protocol (4 bits). This field defines the protocol that the packet is associated with. The currently defined protocols are:

  - 0 - Session Control and Operation

  - 1 - Network Status

- Type (12 bits). This field defines the type of the particular packet. Each protocol defines its own set of types.

- Channel id (32 bits). Identifies the specific communication channel on which the packet is being sent. Each channel is associated with a subset of the network links that collectively form a tree.

- Source Address (32 bits). This specifies the address of the component that sent the packet.

- Destination Address (32 bits). This specifies the address of the component to which the packet is being sent.

- Checksum (32 bits). Computed over the entire packet.
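
To make the field layout concrete, here is a minimal Python sketch of packing and unpacking the common header. It rests on several assumptions that this page does not state: the fields appear on the wire in the order listed, in network byte order; Version, Length, Protocol and Type share the first 32-bit word; and the checksum word immediately follows the destination address. The checksum algorithm is not specified here, so the sketch treats the checksum as an opaque value supplied by the caller. The names `Header`, `HEADER_LEN` and `MAX_PACKET_LEN` are illustrative only.

```python
import struct
from dataclasses import dataclass

HEADER_LEN = 20        # five 32-bit words (assumed layout)
MAX_PACKET_LEN = 1400  # upper bound on the Length field


@dataclass
class Header:
    version: int
    length: int      # total packet length in bytes
    protocol: int
    ptype: int       # the Type field
    channel_id: int
    src: int
    dst: int
    checksum: int = 0  # opaque here; the algorithm is not specified

    def pack(self) -> bytes:
        # Enforce the constraints stated for the Length field.
        if self.length % 4 or not (HEADER_LEN <= self.length <= MAX_PACKET_LEN):
            raise ValueError("length must be a multiple of 4 in [20, 1400]")
        if not (0 <= self.version < 16 and 0 <= self.protocol < 16
                and 0 <= self.ptype < 4096):
            raise ValueError("version/protocol/type out of range")
        # Word 0: version(4) | length(12) | protocol(4) | type(12)
        word0 = ((self.version << 28) | (self.length << 16)
                 | (self.protocol << 12) | self.ptype)
        return struct.pack("!5I", word0, self.channel_id,
                           self.src, self.dst, self.checksum)

    @classmethod
    def unpack(cls, data: bytes) -> "Header":
        word0, channel_id, src, dst, checksum = struct.unpack(
            "!5I", data[:HEADER_LEN])
        return cls(version=word0 >> 28,
                   length=(word0 >> 16) & 0xFFF,
                   protocol=(word0 >> 12) & 0xF,
                   ptype=word0 & 0xFFF,
                   channel_id=channel_id, src=src, dst=dst,
                   checksum=checksum)
```

For example, `Header(1, 24, 0, 1, 5000, 0x00010002, 204).pack()` yields 20 bytes that `Header.unpack` turns back into an equal `Header`.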

All system components have addresses, which serve as concise machine-readable identifiers and provide location information. The first 16 bits of each 32-bit address identify a site, and the remaining 16 bits identify a component associated with that site. The site portion of the address is called the site id and the remainder is called the component number. Components with addresses include servers and routers. Clients interact with the system only through its web interface and through their IP connections to servers, so they do not require netGame addresses.

Addresses with a site id of 0 are reserved for special purposes. Here we list some of the special addresses and their interpretations.

- 0 - Network master controller

- 100 - All servers on channel.

- 101 - All subscribed servers on channel.

- 201 - All routers on channel.

- 202 - Next-hop router on channel.

- 203 - All edge routers on channel.

- 204 - First edge router on channel.

- 300 - All site controllers, for sites on channel.

- 301 - Next-hop site controller on channel.

- 302 - Root site controller.
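
The address structure and the special addresses can be sketched in Python as follows. This assumes that "the first 16 bits" means the most significant 16 bits, and that the special values listed above are complete 32-bit addresses whose site id is 0. The names `Special`, `make_address`, `split_address` and `is_reserved` are hypothetical helpers, not part of any existing code.

```python
from enum import IntEnum


class Special(IntEnum):
    """Special addresses (site id 0) as listed above."""
    MASTER_CONTROLLER = 0
    ALL_SERVERS = 100
    ALL_SUBSCRIBED_SERVERS = 101
    ALL_ROUTERS = 201
    NEXT_HOP_ROUTER = 202
    ALL_EDGE_ROUTERS = 203
    FIRST_EDGE_ROUTER = 204
    ALL_SITE_CONTROLLERS = 300
    NEXT_HOP_SITE_CONTROLLER = 301
    ROOT_SITE_CONTROLLER = 302


def make_address(site_id: int, component: int) -> int:
    """Combine a 16-bit site id and a 16-bit component number."""
    if not (0 <= site_id < 1 << 16 and 0 <= component < 1 << 16):
        raise ValueError("site id and component number must fit in 16 bits")
    return (site_id << 16) | component


def split_address(addr: int) -> tuple[int, int]:
    """Return (site id, component number) for a 32-bit address."""
    return addr >> 16, addr & 0xFFFF


def is_reserved(addr: int) -> bool:
    """Addresses with site id 0 are reserved for special purposes."""
    return addr >> 16 == 0
```

With these helpers, `split_address(make_address(3, 7))` returns `(3, 7)`, and `is_reserved(Special.FIRST_EDGE_ROUTER)` is true.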

The routers maintain routing tables for forwarding packets within channels. The channel routing table at a site typically contains an entry for all other sites that are on the channel, and an entry for all components within the site that are on the channel. These entries provide the information needed by the router to make local routing decisions. Routing table entries can be statically configured or "learned". In learning mode, the source address A of a packet arriving on a channel is used to infer how to forward packets addressed to A.
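
A minimal sketch of a per-channel routing table with learning mode is given below. It assumes that entries are keyed by full 32-bit address, that a "link" is any opaque identifier for where a packet arrived or should be sent, and that statically configured entries take precedence over learned ones. None of these details are fixed by this page, and the class name `ChannelRoutingTable` is illustrative.

```python
class ChannelRoutingTable:
    """Routing table for one channel at one router (illustrative only)."""

    def __init__(self, static=None):
        self._static = dict(static or {})  # address -> link, configured
        self._learned = {}                 # address -> link, inferred

    def learn(self, src_addr, in_link):
        """Record that packets from src_addr arrive on in_link.

        Packets addressed to src_addr can later be forwarded on the
        same link, since channel links carry data in both directions.
        """
        if src_addr not in self._static:   # assumption: static entries win
            self._learned[src_addr] = in_link

    def lookup(self, dst_addr):
        """Return the link for dst_addr, or None if no entry exists."""
        if dst_addr in self._static:
            return self._static[dst_addr]
        return self._learned.get(dst_addr)
```

A router would call `learn` with the source address of each packet arriving on the channel and `lookup` with the destination address before forwarding. How a router handles a miss (a `None` result) is left open here.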

Session Control and Operations

The session control and operations protocol manages all the activities that are specific to an individual game session. It defines a number of specific packet types, which are described briefly below.

- 0 - State update. This packet is used to communicate status information about dynamic objects in the game world to other servers. Packets of this type should be addressed to "all subscribed servers". The payload of the packet includes the identity of the object whose status is being updated, the region of the game world in which the object is currently located and a list of parameter/value pairs giving the current value of the specified parameter.

- 1 - Subscription update. This is used by servers to request changes to their region subscriptions. Packets of this type should be addressed to the "first edge-router". The payload contains two lists of up to 255 values of 16 bits each. The first specifies regions to be added to the subscription list. The second specifies regions to be dropped. The first 16 bits of the payload give the lengths of the two lists.

- 2 - Subscription report. This is sent periodically by routers to servers and gives the router's current view of the regions to which the server is subscribed. This is provided to allow servers to recover from lost subscription update packets. The payload simply lists all the regions (as 16 bit values) that the server is subscribed to.

- 3 - Object query. This is used to request the current state of a particular object in the game world. Packets of this type are addressed to a specific server.

- 4 - Region query. This is used to request the current state of all objects in the game world that belong to a particular region. Packets of this type should be addressed to "all subscribed servers".

- 5 - Query reply. This is used to respond to a prior query request. Packets of this type should be addressed to the specific server that sent the initial query.
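
As an illustration of one of these payloads, the following Python sketch encodes and decodes a subscription update (type 1). It assumes that the leading 16 bits are split into two 8-bit counts (add count first, then drop count), consistent with the 255-entry limit, and that region numbers are in network byte order. Padding the packet to a multiple of 4 bytes is not handled. The function names are hypothetical.

```python
import struct


def encode_subscription_update(add, drop):
    """Build a payload from lists of 16-bit region numbers."""
    if len(add) > 255 or len(drop) > 255:
        raise ValueError("each list may hold at most 255 regions")
    # Two 8-bit counts (assumed split of the 16-bit length field),
    # followed by the add list and then the drop list.
    return struct.pack(f"!BB{len(add) + len(drop)}H",
                       len(add), len(drop), *add, *drop)


def decode_subscription_update(payload):
    """Return (regions_to_add, regions_to_drop) from a payload."""
    n_add, n_drop = struct.unpack_from("!BB", payload, 0)
    regions = struct.unpack_from(f"!{n_add + n_drop}H", payload, 2)
    return list(regions[:n_add]), list(regions[n_add:])
```

For instance, adding regions 1 and 2 while dropping region 3 produces an 8-byte payload that decodes back to `([1, 2], [3])`.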

Network Status

This section summarizes the major system level data structures. The first three items below (client data, connection data and session data) are stored in a distributed hash table, with each site responsible for a portion of the key space in the DHT. The key space covered by each site is known by the other sites, allowing one-hop access to data in the DHT.

Client data

- User name (key)

- User preferences

- Accounting records

- Current sessions

Session data

- Session id (key)

- Users in the session (specified in terms of client addresses)

- Multicast tree - sites, inter-site links with reserved capacities
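
One way to picture one-hop access to the distributed hash table is the Python sketch below. It assumes that keys are hashed into a 32-bit space, that each site owns one contiguous range of that space, and that every site holds the full list of range boundaries. The hash function (SHA-1 truncated to 32 bits) and the class name `KeySpaceMap` are choices made for illustration, not part of the design described here.

```python
import bisect
import hashlib


class KeySpaceMap:
    """Maps DHT keys to the site responsible for them (illustrative)."""

    def __init__(self, ranges):
        # ranges: list of (exclusive upper bound, site id), sorted by
        # bound; the last bound must be 2**32 to cover the whole space.
        self._uppers = [upper for upper, _ in ranges]
        self._sites = [site for _, site in ranges]

    def site_for_hash(self, h: int) -> int:
        # Index of the first range whose upper bound exceeds h.
        return self._sites[bisect.bisect_right(self._uppers, h)]

    def site_for(self, key: str) -> int:
        # Assumed hash: first 4 bytes of SHA-1 over the UTF-8 key.
        digest = hashlib.sha1(key.encode("utf-8")).digest()
        return self.site_for_hash(int.from_bytes(digest[:4], "big"))
```

Because every site holds the same map, a site can compute the owner of a user name or session id locally and send its request directly to that site, with no intermediate lookups.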

The network status information logically forms a graph, with data associated with its nodes and links. Each site maintains the authoritative information about itself and its incoming links, but each site also maintains a complete copy of the global network status. The status information is distributed across a multicast spanning tree of the network, with each node periodically sending a copy of its current status on the multicast tree. The construction of the multicast spanning tree is carried out by a distributed spanning tree maintenance algorithm.

Network Status

- List of sites with up/down status, provisioned capacity (in terms of client sessions) and available capacity.

- Inter-site links with up/down status, provisioned capacity and available capacity.

SPP Deployment

This section discusses some specifics of the planned SPP deployment. Part of the reason to do this here is to verify that the overall framework we are creating makes sense for the SPP (and similarly for other deployment scenarios). We focus here on the fast path component. For demo purposes, we will most likely configure the fast path manually by logging into a slice on the GPE and configuring filters as needed.

We note that implementation on the SPP requires version 2 of the NPE, since version 1 does not support multicast. Implementing a fast path requires that we develop a new code option, including a parsing component and a header formatting component. The parsing component simply extracts from the netGame packet header the fields that are to be used in the lookup key. These include the protocol and type fields, plus the channel id, the destination address and, for state update packets, the region number. This is a total of 96 bits. The TCAM lookup produces a resultsIndex and a statsIndex. The resultsIndex is used to obtain the forwarding information for the packet, while the statsIndex specifies a statistics counter. Each channel id that is active at an SPP will have its own statsIndex, so that we can monitor the traffic sent on the channel. The resultsIndex is used in a second table lookup. For multicast packets, the result of the table lookup includes the fanout and, for each copy, a queue id, the IP destination address of the outgoing packet, and the source and destination UDP port numbers.
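
The 96-bit lookup key can be sketched in Python as below. The field widths come from the packet format (4 + 12 + 32 + 32 + 16 = 96 bits), but the order of the fields within the key and the use of 0 for the region of non-state-update packets are assumptions. In an actual TCAM filter the region bits would presumably be masked out for such packets. The function name `lookup_key` is hypothetical.

```python
def lookup_key(protocol: int, ptype: int, channel_id: int,
               dst: int, region: int = 0) -> bytes:
    """Concatenate the header fields into a 12-byte (96-bit) key."""
    fields = ((protocol, 4), (ptype, 12), (channel_id, 32),
              (dst, 32), (region, 16))
    key = 0
    for value, width in fields:
        if not 0 <= value < (1 << width):
            raise ValueError(f"value {value} does not fit in {width} bits")
        key = (key << width) | value  # append the field to the key
    return key.to_bytes(12, "big")
```

For a state update (protocol 0, type 0) on channel 5000 addressed to "all subscribed servers" (101) in region 7, this gives the 12 bytes `0000 00001388 00000065 0007` in hexadecimal.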

Changing the TCAM requires control interactions through the substrate. That is, code running on a GPE must request new filters through the RMP, which talks to the xScale, which changes the TCAM entry. This will be relatively slow. For region-based filtering, we would like to make things more responsive. This seems to require some special processing in the parse block, the header format block, or both, since these are the two places where we can place slice-specific code.