Using the IPv4 Code Option

Revision as of 16:44, 17 March 2010

Introduction

An SPP is a PlanetLab node that combines the high performance and programmability of Network Processors (NPs) with the programmability of general-purpose processors (GPEs). The Hello GPE World Tutorial page describes how to use a GPE. This page describes the SPP's fastpath (NP) features, using the IPv4 code option as an example. Those features include:

- Bandwidth, queue, filter and memory resources

- Logical interfaces (meta-interfaces) within each physical interface

- Packet scheduling queues and their binding to meta-interfaces

- Filters for forwarding packets to queues

Like any PlanetLab node, the SPP runs a server on each GPE that allows a user to allocate a subset of a node's resources called a slice. Although an SPP user can prototype a new router by writing a socket program for the GPE, the SPP's high performance can only be tapped by using the SPP's NPs. That is, the user must adopt a fastpath-slowpath packet processing paradigm in which the fastpath uses NPs to process data packets at high speed while the slowpath uses GPEs to handle control and exception packets. The SPP's IPv4 code option is an example of this fastpath-slowpath paradigm.

This page describes a simple IPv4 code option and, in doing so, illustrates the fastpath-slowpath paradigm that any high-speed implementation would use. XXXXX SOMETHING GOES HERE XXXXX

Configuring the SPP to use the fastpath involves these steps:

- Reserve resources

- Create a fastpath (FP)

- Create one or more fastpath endpoints (meta-interfaces)

- Create packet queues and bind each queue to a meta-interface

- Install filters to direct incoming packets to queues

A fastpath creation request specifies the SPP resources you want, such as interface bandwidths, queues, filters and memory. Once you are granted those resources, you define meta-interfaces within the fastpath and then organize the meta-interfaces, queues and filters to forward packets.

The SPP Fastpath

A network of nodes containing SPPs is formed by connecting the nodes with UDP tunnels between Internet interfaces. This network forms a substrate which can carry packets belonging to several overlay networks. The nodes can be SPPs, hosts (PlanetLab and non-PlanetLab), or any packet processors that support this paradigm. A UDP tunnel has two endpoints, each defined by an (IP address, UDP port number) pair. Note that the IP address is from the addressing domain of the Internet, not the overlay.

A slice creates one or more endpoints for communication. An SPP endpoint is a pair (IP address, port number) where the IP address is the address of one of the SPP's physical interfaces. Furthermore, an endpoint has an output bandwidth guarantee. For example, a slice could define (64.57.23.210, 19000) to be a 100 Mbps endpoint at the Salt Lake City SPP since 64.57.23.210 is the IP address of the SPP's interface 0. There is one caveat: the endpoint must be unique among all active slices using that interface. Since each slice will have its own unique set of endpoints, the SPP can support concurrent overlay networks.

The SPP makes a distinction between slowpath and fastpath endpoints. Although they are both identified through an (IP address, port number) pair and have a bandwidth guarantee, a fastpath endpoint can only accept packets encapsulated in an IP/UDP packet while a slowpath endpoint can accept any IP-based protocol. Furthermore, packets arriving at a slowpath endpoint are sent to a GPE, but ones arriving at a fastpath endpoint are sent to the NPE. A fastpath endpoint is often called a meta-interface.

A packet that travels through a network of SPPs has an outer (Internet) header, an inner (overlay) header and a payload (packet content); i.e., a slice's packet is encapsulated in an IP/UDP packet. If an SPP has been configured to process the packet using the fastpath, the packet is sent to the NPE where the outer header is removed to expose the overlay packet. The NPE processes the overlay packet and encapsulates the packet in an IP/UDP packet before sending the packet out of one of its interfaces. In the case of an IPv4 overlay, an IPv4 packet is encapsulated inside another IPv4 packet.
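Encapsulation adds a fixed amount of header overhead to every overlay packet. The sketch below does that arithmetic, assuming a 20-byte outer IPv4 header with no options and an 8-byte UDP header; the helper names and the 1500-byte MTU in the example are illustrative assumptions, not SPP parameters given on this page.

```bash
#!/usr/bin/env bash
# Sketch: size accounting for an overlay packet carried in an IP/UDP tunnel.
# Assumes an outer IPv4 header without options (20 bytes) plus a UDP header (8 bytes).
OUTER_IPV4_HDR=20
UDP_HDR=8

# encap_size INNER_BYTES -> size of the encapsulated packet at the IP layer
encap_size() {
  echo $(( $1 + OUTER_IPV4_HDR + UDP_HDR ))
}

# max_inner MTU -> largest overlay packet that fits without fragmentation
# (the MTU is supplied by the caller; it is an assumption, not an SPP value)
max_inner() {
  echo $(( $1 - OUTER_IPV4_HDR - UDP_HDR ))
}

# A default Linux ping carries 56 data bytes, so the overlay IPv4/ICMP
# packet is 20 + 8 + 56 = 84 bytes before encapsulation.
echo "encapsulated ping: $(encap_size 84) bytes"
echo "max overlay packet for a 1500-byte MTU: $(max_inner 1500) bytes"
```

In the IPv4 overlay case, both the inner and the outer headers are IPv4, so a packet pays for two IP headers on the wire.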

Since the primary function of a router is to forward incoming packets to the next destination (or next hop), a fastpath slice has to have enough meta-interfaces to accept packets from its neighboring nodes and forward them to the next-hop nodes.

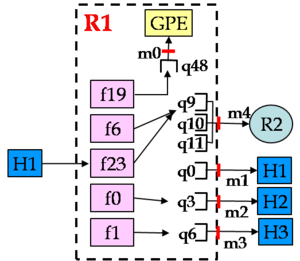

The figure (right) shows some of the paths that a packet from H1 can take through the fastpath of the R1 router. For simplicity, the diagram doesn't show resources used by packets from other nodes. The figure shows these fastpath features:

- There are five meta-interfaces labeled m0-m4.

- The blocks labeled f19, f6, f23, f0 and f1 are filters that direct slice packets to queues q48, q9, q3 and q6. (Queues q10, q11 and q0 are not used by packets coming from H1.)

- More than one queue (e.g., q9, q10, q11) can be bound to one meta-interface.

Fastpath Filters

A filter directs matching packets to a queue in the fastpath. Since a queue is bound to one meta-interface, a filter effectively forwards packets from an input meta-interface (fastpath endpoint) to an output meta-interface.

A filter has two parts: a match criteria and an action. The match criteria is used to select packets, and the action indicates what to do with matching packets. A filter is applied to the header of overlay packets; i.e., after the outer packet header has been removed. The match criteria is used to examine both the incoming meta-interface and fields from the overlay packet header. The action is used to indicate whether a matching packet should be dropped or where to enqueue the packet (the QID). It also indicates which statistics counter group should be updated and the next-hop address that should be used in the outer header.

The entire set of filters forms the router's forwarding table.

Fastpath Queues

A fastpath queue provides packet buffering capacity and also guaranteed transmission bandwidth. If more than one queue is bound to a meta-interface, the bandwidth capacity of the meta-interface is shared among the queues in proportion to their bandwidth guarantees. A slice sets the buffering capacity through the threshold parameter and the guaranteed bandwidth through the bw parameter.

As you can see in the figure (above), each queue is bound to one meta-interface, and each meta-interface can have more than one queue. Users reserve a number of queues N before use of the SPP where 1 ≤ N ≤ #free ≤ 65,536. Also, each queue has a unique ID (QID) chosen between 0 and N-1.
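To make the proportional sharing concrete, here is a minimal sketch of the arithmetic, assuming every queue bound to the meta-interface is continuously backlogged (an idle queue leaves its share to the others). The function name mi_shares and the 3000 Kbps guarantee in the example are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: split a meta-interface's bandwidth among its queues in proportion
# to their guaranteed rates, assuming all queues are continuously backlogged.
# Rates are in Kbps; integer arithmetic truncates fractional Kbps.
#
# usage: mi_shares MI_BW QBW1 [QBW2 ...]   (prints one share per queue)
mi_shares() {
  local mi_bw=$1; shift
  local total=0 bw
  for bw in "$@"; do total=$(( total + bw )); done
  if (( total == 0 )); then
    echo "mi_shares: queue guarantees sum to 0" >&2
    return 1
  fi
  for bw in "$@"; do
    echo $(( mi_bw * bw / total ))
  done
}

# Two queues guaranteed 1000 and 3000 Kbps on a 10,000 Kbps meta-interface
# would each get about 2500 and 7500 Kbps when both are busy.
mi_shares 10000 1000 3000
```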

The example below shows additional features:

- The GPE can inject packets into the FP.

- Exception packets (e.g., bad slice packet header) are sent to the GPE for further processing.

The example also shows how to:

- Reserve FP resources and then create a FP for the IPv4 code option.

- Create FP endpoints, called meta-interfaces (MIs), with bandwidth guarantees.

- Create and configure queues with drop thresholds and bandwidth guarantees.

- Bind queues to MIs.

- Install IPv4 filters.

- Monitor traffic.

Utilities and Daemons

Several utilities and daemons are used in the IPv4 example:

- scfg (slice configuration)

  - Used to get SPP information, reserve resources, configure queues and allocate/free resources

- sliced (slice statistics daemon)

  - Used to process statistics monitoring requests

- ip_fpc (IPv4 filter configuration)

  - Used to create IPv4 filters

- ip_fpd (IPv4 daemon)

  - Used to create an IPv4 fastpath and process IPv4 local-delivery and exception traffic

The executables are in the directory /usr/local/bin/ on each SPP slice.

Note that only the last two are specific to the IPv4 code option; both scfg and sliced can be used with any code option. If you want to develop a new code option, you will need to provide a utility for configuring filters and a fastpath daemon for it, probably by modifying the code for the IPv4 fastpath.

Example

The figure (right) shows the main parts of the IPv4 slice described below. The symbols S, D, S' and D' are used to denote hosts and the traffic processes running on those hosts. The example includes one SPP (labeled R) which includes the GPE host D', and three other hosts (labeled S, S' and D). There are two traffic flows: 1) a unidirectional flow from S to D; and 2) a bidirectional flow between S' and D'. The slice traffic between S' and D' is ping traffic (ICMP echo request packets from S' to D', and ICMP echo reply packets from D' to S').

The IPv4 slice uses four slice interfaces (m0, m1, m2, and m3), four queues (q0, q1, q8 and q9) and three filters (f0, f1 and f2). In the flow between S' and D':

- An incoming packet containing an IPv4 ping packet from S' is stripped of its outer header and directed to queue q8 by filter f0.

- The ip_fpd daemon process (labeled as D') running on a GPE reads the echo request packet from queue q8, forms an echo reply packet, and injects the packet into the FP.

- The echo reply packet will be placed into queue q0 by filter f1.

- The packet is encapsulated into an IP/UDP packet and sent out MI m1.

- Finally, the encapsulated ICMP echo reply packet arrives back at S'.

In the flow from S to D:

- An incoming slice packet from S to MI m2 is stripped of its outer header and directed to queue q1 by filter f2.

- The packet is encapsulated into an IP/UDP packet and sent out MI m3.

- Finally, the encapsulated IP/UDP packet arrives at D.

This example will show the basics of using the IPv4 slice. It can be easily extended in a number of ways:

- Include more flows and a greater variety of flows.

- Add filters to provide more discrimination for packet forwarding.

- Add queues and configure filters to give some flows preferential treatment.

- Add another SPP and more hosts to form a larger network.

The exercises and their discussion explore these extensions further.

Preparation

XXXXX getting files, etc

Running the Example (Overview)

XXXXX

Setting up the SPP to use the fastpath resembles the procedure used to set up the slowpath in The Hello GPE World Tutorial, but with several additional steps:

- Run the mkResFile4IPex.sh script to create a resource reservation file.

- Run the setupIPex.sh script to configure the SPP, which includes:

  - Submit the resource reservation.

  - Claim the resources described by the reservation file.

  - Set up a slowpath endpoint for monitoring traffic.

  - Start the ip_fpd fastpath daemon.

  - Create all of the fastpath queues and set their drop and scheduling parameters.

  - Create all of the filters for packet forwarding.

Of the six steps performed by the setup script, only the last three are completely specific to the fastpath. Although the details of the first three differ from the slowpath setup, the basic idea is the same.

Create a Resource Reservation File

As in The Hello GPE World Tutorial, you can hand-edit a res.xml file or run a script to generate a reservation file for this example. See the reservation file ~/ipv4-fp/res.xml and the script ~/ipv4-fp/mkResFile4IPex.sh. The res.xml file for the Salt Lake City SPP looks like this:

<?xml version="1.0" encoding="utf-8" standalone="yes"?>
<spp>
  <rsvRecord>
    <!-- Date Format: YYYYMMDDHHmmss -->
    <!-- That's year, month, day, hour, minutes, seconds -->
    <rDate start="20100310150100" end="20100410150100" />
    <!-- fastpath -->
    <fpRSpec name="fp9">
      <fpParams>
        <copt>1</copt>
        <bwspec firm="100000" soft="0" />
        <rcnts fltrs="10" queues="10" buffs="1000" stats="10" />
        <mem sram="4096" dram="0" />
      </fpParams>
      <ifParams>
        <ifRec bw="20000" ip="64.57.23.210" />
        <ifRec bw="20000" ip="64.57.23.214" />
        <ifRec bw="20000" ip="64.57.23.218" />
      </ifParams>
    </fpRSpec>
    <!-- slowpath -->
    <plRSpec>
      <ifParams>
        <!-- reserve 5 Mb/s on one interface -->
        <ifRec bw="5000" ip="64.57.23.210" />
      </ifParams>
    </plRSpec>
  </rsvRecord>
</spp>

This reservation file includes not only a slowpath component (plRSpec), but also a fastpath component (fpRSpec).

The fastpath component contains the following:

- The fastpath name is fp9 and is used when claiming the resources.

- The fastpath parameters are:

  - Code option 1, which denotes IPv4.

  - A guaranteed aggregate bandwidth of 100,000 Kbps (i.e., 100 Mbps), which the user can divide among the interfaces listed in the ifParams section of the reservation.

  - Maximum resource counts of 10 filters, 10 queues, 1000 buffers and 10 stats indices, which indicate the maximum number of each resource type to be used.

  - Maximum memory of XXXXX which XXXXX

- The interface parameters are:

  - A guaranteed bandwidth of 20,000 Kbps on each of the three interfaces 64.57.23.210, 64.57.23.214 and 64.57.23.218, taken from each interface's capacity.

Resource identifiers (e.g., filter identifiers) are numbered from 0 to N-1, where N is the maximum number of declared resources. For example, since there is a maximum of 10 filters, the filter identifiers that the user can use are 0 to 9 inclusive. The maximum number of queues also determines the QIDs (queue identifiers) of the two special queues for error (exception) packets and local delivery packets: N-1 and N-2 respectively. In our example, that means that queue 9 will be used for error packets and queue 8 will be used for local delivery packets.
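A minimal sketch of these numbering rules, using the counts from the example reservation file; error_qid, ld_qid and valid_id are hypothetical helper names, not SPP utilities.

```bash
#!/usr/bin/env bash
# Sketch: identifier ranges implied by <rcnts fltrs=... queues=... /> in res.xml.
# IDs run from 0 to N-1; the two highest QIDs are reserved:
#   N-1 for error (exception) packets, N-2 for local delivery packets.
# (Assumes at least 2 queues were reserved.)
error_qid() { echo $(( $1 - 1 )); }
ld_qid()    { echo $(( $1 - 2 )); }

# valid_id ID N -> exit status 0 if 0 <= ID <= N-1
valid_id() { (( $1 >= 0 && $1 < $2 )); }

nfilters=10 nqueues=10   # values from the example reservation file
echo "filter IDs: 0..$(( nfilters - 1 ))"
echo "error queue: $(error_qid "$nqueues"), local delivery queue: $(ld_qid "$nqueues")"
valid_id 10 "$nfilters" || echo "filter ID 10 is out of range"
```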

Note that the sum of the guaranteed bandwidths of the three interfaces is only 60 Mbps, less than the 100 Mbps of the entire fastpath. The only requirement is that the total of all interface bandwidths cannot exceed the fastpath's aggregate bandwidth parameter.
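This constraint can be checked before submitting a reservation. The sketch assumes you pass the firm bandwidth and the interface bandwidths (all in Kbps) by hand rather than parsing res.xml; check_fp_bw is a hypothetical name.

```bash
#!/usr/bin/env bash
# Sketch: verify that the fastpath interface reservations (<ifRec bw=...>)
# do not exceed the fastpath's aggregate firm bandwidth (<bwspec firm=...>).
# All values are in Kbps.
#
# usage: check_fp_bw FIRM_BW IF_BW1 [IF_BW2 ...]
check_fp_bw() {
  local firm=$1; shift
  local sum=0 bw
  for bw in "$@"; do sum=$(( sum + bw )); done
  if (( sum > firm )); then
    echo "interfaces need $sum Kbps but the fastpath only has $firm Kbps" >&2
    return 1
  fi
  echo "ok: $sum of $firm Kbps assigned to interfaces"
}

# The example reservation: 100,000 Kbps firm, three interfaces at 20,000 Kbps.
check_fp_bw 100000 20000 20000 20000
```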

Unlike the example in The Hello GPE World Tutorial where the slowpath endpoint was used to process packets, the slowpath endpoint here will be used for traffic monitoring data.

Set Up the SPP

The setupIPex.sh script is used to make a reservation and configure the SPP for this example. Below is a sketch of the script showing the utility/daemon used and the general form of the commands:

scfg --cmd make_reserv                 # Make reservation
scfg --cmd claim_resources             # Claim resources
scfg --cmd setup_sp_endpoint ...       # Set up SP endpoint (traffic monitoring)
ip_fpd --fpName fp9 ... > ip_fpd.log & # Create FP; daemon continues to handle LD&EX pkts
scfg --cmd set_queue_params ...        # Set queue params for LD&EX queues
## REPEAT FOR ALL FP endpoints:
scfg --cmd setup_fp_tunnel ...         # Set up MI
scfg --cmd bind_queue ...              # Bind queue to MI
scfg --cmd set_queue_params ...        # Set queue params
## REPEAT FOR ALL filters:
ip_fpc --cmd write_fltr ...            # Create filter

Now, let's look at the script in detail.

Reserve and Claim Resources

scfg --cmd make_reserv --xfile res.xml # Make reservation

scfg --cmd claim_resources # Claim resources

- res.xml is the reservation file we created earlier.

- claim_resources obtains the slowpath and fastpath resources specified in the current reservation.

XXXXX

Create the Slowpath Endpoint for Monitoring

scfg --cmd setup_sp_endpoint --bw 1000 --ipaddr 64.57.23.210 --port 3551 --proto 6

- We will not use this endpoint until the section Monitoring Traffic.

- guaranteed bandwidth: 1000 Kbps

- use slowpath endpoint (64.57.23.210, 3551) for monitoring

- sliced command: "sliced --ip 64.57.23.194 --port 3551 &"

- sliced sends counter values in TCP packets to the remote SPPmon GUI

- 64.57.23.210 is the IP address of the Salt Lake City SPP's interface 0

XXXXX

Create a Fastpath Instance and the ip_fpd Daemon

ip_fpd --fpName fp9 --myIP 9.9.2.249 --myPort 5556 > ip_fpd.log &

- Runs the ip_fpd daemon on a GPE in the background

- Claims fastpath resources

- Tells RMP to load and initialize the IPv4 code option

- Handles local delivery and error packets in the background

  - Gets packets from the two queues with the highest QIDs through the vserver

  - Local delivery packets: packets addressed to the fastpath's IP address

    - ip_fpd can inject a response packet back into the fastpath

  - Error packets: packets flagged by the fastpath as errors

    - e.g., TTL expired (ip_fpd generates an ICMP packet)

- --fpName fp9: fp9 is the name of the fastpath in the res.xml reservation file

- --myIP 9.9.2.249: the IP address of the fastpath slice

- --myPort 5556: XXX

Note:

- a filter puts local delivery packets into the local delivery queue

- another filter directs the slowpath response to another queue

- the fastpath code option puts error packets into the error queue

- a vserver passes the local delivery and error packets to the ip_fpd daemon

XXXXX

Configure the Local Delivery and Error Queues

scfg --cmd set_queue_params --fpid 0 --qid 9 --threshold 100 --bw 1000

scfg --cmd set_queue_params --fpid 0 --qid 8 --threshold 100 --bw 1000

- If the reservation file specifies a maximum of N queues, queue N-1 is used for error packets and queue N-2 is used for local delivery packets.

- We requested 10 queues, so queue 9 is for error packets and queue 8 is for local delivery packets.

- The two queues are created by the ip_fpd daemon during initialization.

- These two commands set the drop threshold (100 packets) and guaranteed bandwidth (1000 Kbps) of each of those two queues.
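Because the two special QIDs follow directly from N, the two commands above can be generated rather than typed. The sketch below only prints them (a dry run); special_queue_cmds is a hypothetical helper, and the threshold and bandwidth are the example's values.

```bash
#!/usr/bin/env bash
# Sketch: print the set_queue_params commands for the error queue (N-1)
# and the local delivery queue (N-2), given the reserved queue count N.
# This only echoes the commands; it does not run scfg.
#
# usage: special_queue_cmds N THRESHOLD BW_KBPS
special_queue_cmds() {
  local n=$1 threshold=$2 bw=$3 qid
  for qid in $(( n - 1 )) $(( n - 2 )); do
    echo "scfg --cmd set_queue_params --fpid 0 --qid $qid --threshold $threshold --bw $bw"
  done
}

special_queue_cmds 10 100 1000
```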

XXXXX

Create Tunnels

scfg --cmd setup_fp_tunnel --fpid 0 --ipaddr 64.57.23.210 --port 19001 --bw 10000

- Need to create a fastpath endpoint for:

  - Local delivery packets (packets addressed to this router) and their responses

  - Incoming transit packets

  - Outgoing transit packets

- The example uses four meta-interfaces (shown as m0, m1, m2, m3); m0, the interface between the slowpath and the fastpath, exists implicitly, so only three tunnels are created here

- --fpid: Fastpath ID is always 0 (for now)

- --ipaddr, --port: fastpath endpoint

- --bw: guaranteed bandwidth of 10,000 Kbps

Repeat for (64.57.23.214, 19001) and (64.57.23.218, 19001).

XXXXX

Bind and Configure Queues

scfg --cmd setup_fp_tunnel --fpid 0 --ipaddr 64.57.23.210 --port 19001 --bw 10000

scfg --cmd bind_queue --fpid 0 --miid $? --qid_list_type 0 --qid_list 0

scfg --cmd set_queue_params --fpid 0 --qid 0 --threshold 100 --bw 1000

- Queues

  - Each queue is bound to a fastpath endpoint using scfg --cmd bind_queue ...

  - A queue's parameters are set using scfg --cmd set_queue_params ...

  - The user reserves a maximum number of queues N before use, where 1 <= N <= #free <= 65,536

  - Each queue has a unique ID (0 to N-1), or QID

- bind_queue arguments

  - --fpid: fastpath ID (always 0 for now)

  - --miid: meta-interface ID returned by setup_fp_tunnel (the script passes its exit status, $?)

  - --qid_list_type: 0 means QIDs will be listed individually; 1 means QIDs are in a range

  - --qid_list: the QID

- set_queue_params arguments

  - --fpid: as above

  - --qid: the QID

  - --threshold: capacity (#pkts)

  - --bw: guaranteed minimum output rate (Kbps)

    - can transmit faster if there is no competition for the output interface
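When the same three commands are repeated for every endpoint, a small wrapper can keep them consistent. This is a sketch, not part of the example scripts: setup_endpoint and run_cmd are hypothetical, DRY_RUN=1 (the default) only prints the commands, and in that mode the MI ID is shown as the placeholder $MIID because it is only known after setup_fp_tunnel runs. Like the example script, it assumes setup_fp_tunnel reports the MI ID through its exit status.

```bash
#!/usr/bin/env bash
# run_cmd: print the command in dry-run mode (DRY_RUN=1, the default), else execute it
run_cmd() {
  if [ "${DRY_RUN:-1}" = 1 ]; then echo "$*"; else "$@"; fi
}

# Hypothetical helper: create one fastpath endpoint (MI), bind one queue to it,
# and set that queue's parameters, using the scfg commands shown above.
#
# usage: setup_endpoint IPADDR PORT MI_BW QID THRESHOLD Q_BW
setup_endpoint() {
  local ipaddr=$1 port=$2 mi_bw=$3 qid=$4 threshold=$5 q_bw=$6 miid
  if [ "${DRY_RUN:-1}" = 1 ]; then
    echo "scfg --cmd setup_fp_tunnel --fpid 0 --ipaddr $ipaddr --port $port --bw $mi_bw"
    miid='$MIID'   # placeholder: the real MI ID is unknown in a dry run
  else
    scfg --cmd setup_fp_tunnel --fpid 0 --ipaddr "$ipaddr" --port "$port" --bw "$mi_bw"
    miid=$?        # as in the example script, the exit status is the MI ID
  fi
  run_cmd scfg --cmd bind_queue --fpid 0 --miid "$miid" --qid_list_type 0 --qid_list "$qid"
  run_cmd scfg --cmd set_queue_params --fpid 0 --qid "$qid" --threshold "$threshold" --bw "$q_bw"
}

# The endpoint and queue from the commands at the start of this section.
setup_endpoint 64.57.23.210 19001 10000 0 100 1000
```

With DRY_RUN=0 the commands run on the slice. Since a shell exit status can only carry values 0 to 255, this approach relies on MI IDs staying in that range.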

XXXXX

Create IPv4 Filters

A filter is used to direct matching packets to a queue. Since each queue is bound to one meta-interface (fastpath endpoint), a filter effectively forwards matching packets from an input endpoint to an output endpoint via a queue.

There are three filters shown in the diagram:

- Filter f0 directs local delivery packets arriving on meta-interface m1 to queue q8 which will be sent to the ip_fpd daemon via the vserver.

- Filter f1 directs response packets from ip_fpd to queue q0 for transmission back to the sending process S'.

- Filter f2 directs packets arriving on meta-interface m2 from process S to queue q1 before being transmitted out of meta-interface m3 to the receiving process D.

Although we have labeled the filters as f0, f1 and f2, the queues as q0, q1, q8 and q9, and the meta-interfaces as m0, m1, m2 and m3, they are assigned non-negative IDs in the configuration script. The labels were chosen so that the actual ID assigned in the configuration script is the integer part of the label; e.g., queue q8 corresponds to QID 8.

A typical filter for any overlay packet has two parts:

- The match criteria, which specifies:

  - the input endpoint

  - the header fields from the overlay packet

- The packet disposition, which specifies:

  - whether the packet should be dropped

  - if not dropped, where the packet should be enqueued (the QID)

  - which counter group to update

  - the next-hop endpoint to which the packet should be sent

The entire set of filters forms the router's forwarding table. Note that the packet disposition part is independent of the code option. But the match criteria does depend on the code option. For example, when using the IPv4 code option, the header fields from the overlay packet would involve the 5-tuple from the IPv4 fastpath header fields (e.g., destination IP address and port number).

To install an IPv4 fastpath filter, use the ip_fpc (IP fastpath configuration) utility with the --cmd write_fltr command. The match criteria has two parts: a key and a mask. So, an IPv4 filter has this form:

ip_fpc --cmd write_fltr --fpid 0 --fid I --keytype T \
    ... Match Criteria Key ... \
    ... Match Criteria Mask ... \
    ... Result ...

where:

- --fpid 0 indicates fastpath 0 (for now, that is the only option)

- --fid I sets the filter ID to I

  - If you set aside N filters in your reservation file, I is a non-negative integer between 0 and N-1.

- --keytype T distinguishes between normal filters (0) and bypass filters (1). Most filters are normal filters.

- The mask is used to indicate which parts of the key should be ignored during matching.

For example, the IPv4 filter f2 (line numbers have been added to clarify exposition and are not part of the filter) is shown below:

1  ip_fpc --cmd write_fltr --fpid 0 --fid 2 --keytype 0 \
2      --key_rxmi 2 \
3      --key_daddr 9.9.1.1 --key_saddr 0 --key_sport 0 --key_dport 0 --key_proto 0 \
4      --mask_daddr 0xFFFFFFFF --mask_saddr 0 --mask_dport 0 --mask_sport 0 --mask_flags 0 \
5      --qid 1 --sindx 2 --txdaddr 128.252.153.98 --txdport 19000

The match criteria is in lines 2-4, and the disposition (result) is in line 5. The match criteria consists of the key in lines 2-3, and the mask in line 4. The mask is used to indicate which parts of the key should be ignored during matching.

In lines 1-2, the arguments are:

- --fpid 0: The fastpath ID is 0 (which, for now, is the only option).

- --fid 2: The filter ID is 2.

- --keytype 0: The filter is normal, the most typical filter type. Other values are described later.

- --key_rxmi 2: The packet arrived on meta-interface 2.

Note that each key field (e.g., --key_daddr K) except for --key_rxmi has a corresponding mask field (e.g., --mask_daddr M). A packet field F' matches a filter field F for a mask M if (F' & M) = (F & M), where '&' is the bitwise AND operator. So, any packet field F' will match a filter field F if the corresponding mask field is 0. A filter matches a packet if every filter field matches the corresponding packet header field.

So, the filter above matches any packet with a destination IP address of 9.9.1.1 (--key_daddr 9.9.1.1). The complete IP address must match since the mask is 0xFFFFFFFF which will select every bit from the destination IP address.
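The matching rule can be modeled in a few lines of shell. This sketch only illustrates the (F' & M) = (F & M) test described above, not how ip_fpc or the NPE implements it; ip_to_int and field_matches are hypothetical helpers.

```bash
#!/usr/bin/env bash
# Sketch of the masked comparison: packet field F' matches filter field F
# under mask M when (F' & M) == (F & M).

# ip_to_int A.B.C.D -> 32-bit unsigned integer (octets must be 0-255, no leading zeros)
ip_to_int() {
  local IFS=.
  local -a o
  read -r -a o <<< "$1"
  echo "$(( (o[0] << 24) | (o[1] << 16) | (o[2] << 8) | o[3] ))"
}

# field_matches PKT_VALUE KEY MASK (integers, hex allowed) -> exit status 0 on a match
field_matches() {
  (( ($1 & $3) == ($2 & $3) ))
}

# Filter f2's destination key and full mask.
key=$(ip_to_int 9.9.1.1)
for daddr in 9.9.1.1 9.9.1.2; do
  if field_matches "$(ip_to_int "$daddr")" "$key" 0xFFFFFFFF; then
    echo "$daddr: match"
  else
    echo "$daddr: no match"
  fi
done

# A mask of 0 ignores the field entirely, so any value matches.
field_matches "$(ip_to_int 10.0.0.1)" "$key" 0 && echo "mask 0: match"
```

Under this rule, a mask such as 0xFFFFFF00 would ignore the last octet, so a key of 9.9.1.1 would then match any destination from 9.9.1.0 to 9.9.1.255.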

For now, we ignore the --mask_flags field except to say that it is used to match on TCP flags in the TCP header.

Finally, line 5 indicates the packet disposition:

- --qid 1: enqueue the packet onto queue 1

- --sindx 2: update counter group 2

- --txdaddr 128.252.153.98 --txdport 19000: encapsulate the packet for transmission to the fastpath endpoint (128.252.153.98, 19000)
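Filters that differ only in a few values, like f2, can be generated from a template. The sketch below prints a write_fltr command with exactly the fields used by f2 above; daddr_filter_cmd is a hypothetical helper, and it always uses --keytype 0 with a full destination mask and all other masks set to 0.

```bash
#!/usr/bin/env bash
# Sketch: build (but do not run) an ip_fpc write_fltr command for a normal
# filter that matches on the input meta-interface and an exact destination
# address, ignoring all other header fields.
#
# usage: daddr_filter_cmd FID RXMI DADDR QID SINDX TXDADDR TXDPORT
daddr_filter_cmd() {
  local fid=$1 rxmi=$2 daddr=$3 qid=$4 sindx=$5 txdaddr=$6 txdport=$7
  local -a cmd=(
    ip_fpc --cmd write_fltr --fpid 0 --fid "$fid" --keytype 0
    --key_rxmi "$rxmi"
    --key_daddr "$daddr" --key_saddr 0 --key_sport 0 --key_dport 0 --key_proto 0
    --mask_daddr 0xFFFFFFFF --mask_saddr 0 --mask_dport 0 --mask_sport 0 --mask_flags 0
    --qid "$qid" --sindx "$sindx" --txdaddr "$txdaddr" --txdport "$txdport"
  )
  echo "${cmd[*]}"   # join the arguments with spaces for display
}

# Reproduces filter f2.
daddr_filter_cmd 2 2 9.9.1.1 1 2 128.252.153.98 19000
```

To install the filter rather than print it, the helper could run "${cmd[@]}" instead of echoing it; keeping the arguments in a bash array avoids quoting problems.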

The filter f0 directs local delivery packets arriving on meta-interface m1 to queue q8:

ip_fpc --cmd write_fltr --fpid 0 --fid 0 --keytype 0 \
    --key_rxmi 1 \
    --key_daddr 0 --key_saddr 0 --key_sport 0 --key_dport 0 --key_proto 0 \
    --mask_daddr 0xFFFFFFFF --mask_saddr 0 --mask_dport 0 --mask_sport 0 --mask_flags 0 \
    --qid 8 --sindx 0 --res_ld --txdaddr 0 --txdport 0

This filter is identical to filter f2 except:

- The filter ID is 0 (instead of 2).

- The input meta-interface key is 1 (--key_rxmi 1) instead of 2.

- The destination address key (--key_daddr) is 0 instead of 9.9.1.1.

- The QID is 8 (--qid 8) instead of 1.

- The stats index is 0 instead of 2.

- There is an extra field --res_ld that means that the packet should be marked as a local delivery packet and that the --txdaddr and --txdport fields should be ignored.

The filter f1 directs packets coming from the GPE to queue q0 for transmission back to sender S':

ip_fpc --cmd write_fltr --fpid 0 --fid 1 --keytype 1 \
    --key_rxmi 0 \
    --key_daddr 128.252.153.99 --key_saddr 0 --key_sport 0 --key_dport 0 --key_proto 0 \
    --mask_daddr 0xFFFFFFFF --mask_saddr 0 --mask_dport 0 --mask_sport 0 --mask_flags 0 \
    --qid 0 --sindx 1 --txdaddr 128.252.153.99 --txdport 99999

This filter is identical to filter f2 except:

- The keytype is 1 (--keytype 1) indicating a bypass filter.

- The filter ID is 1 (instead of 2).

- The input meta-interface key is 0 (--key_rxmi 0) instead of 2. Note that meta-interface 0 is always the interface between the slowpath and the fastpath.

- The destination address key (--key_daddr) is 128.252.153.99 instead of 9.9.1.1.

- The QID is 0 (--qid 0) instead of 1.

- The stats index is 1 instead of 2.

- The --txdaddr and --txdport fields are different.

A bypass filter means that the packet should bypass the classification step. We want to avoid classification because the filter is intended for packets whose destination UDP tunnel has already been determined XXXXX.

XXXXX

XXXXX setup script setupFP1.sh

- MIs

  - Implicit MI 0 for LD and EX

  - 1 for each FP EP plus MI 0 (LD, EX)

- Filters

  - direct incoming packet to queue

  - For LD: "--txdaddr 0 --txdport 0 --qid 0"

- Queues

  - each queue is bound to an MI

  - each MI can have 1 or more queues

  - q(N-1) for EX and q(N-2) for LD, where N is #queues

  - pkt scheduling: weighted fair queueing

Send Traffic to a Fastpath Slice

XXXXX

Tear Down the SPP

XXXXX teardown script teardownFP1.sh

Monitoring Traffic

XXXXX

Preparation

XXXXX

Set Up the Monitor Endpoint

scfg --cmd setup_sp_endpoint --bw 1000 --ipaddr 64.57.23.210 --port 3551 --proto 6

- guaranteed bandwidth: 1000 Kbps

- use slowpath endpoint (64.57.23.210, 3551) for monitoring

- sliced command: "sliced --ip 64.57.23.194 --port 3551 &"

- sliced sends counter values in TCP packets to the remote SPPmon GUI

- 64.57.23.210 is the IP address of the Salt Lake City SPP's interface 0

Start SPPmon

XXXXX

Send Traffic

XXXXX

Exercises

XXXXX